LLM Flashcards

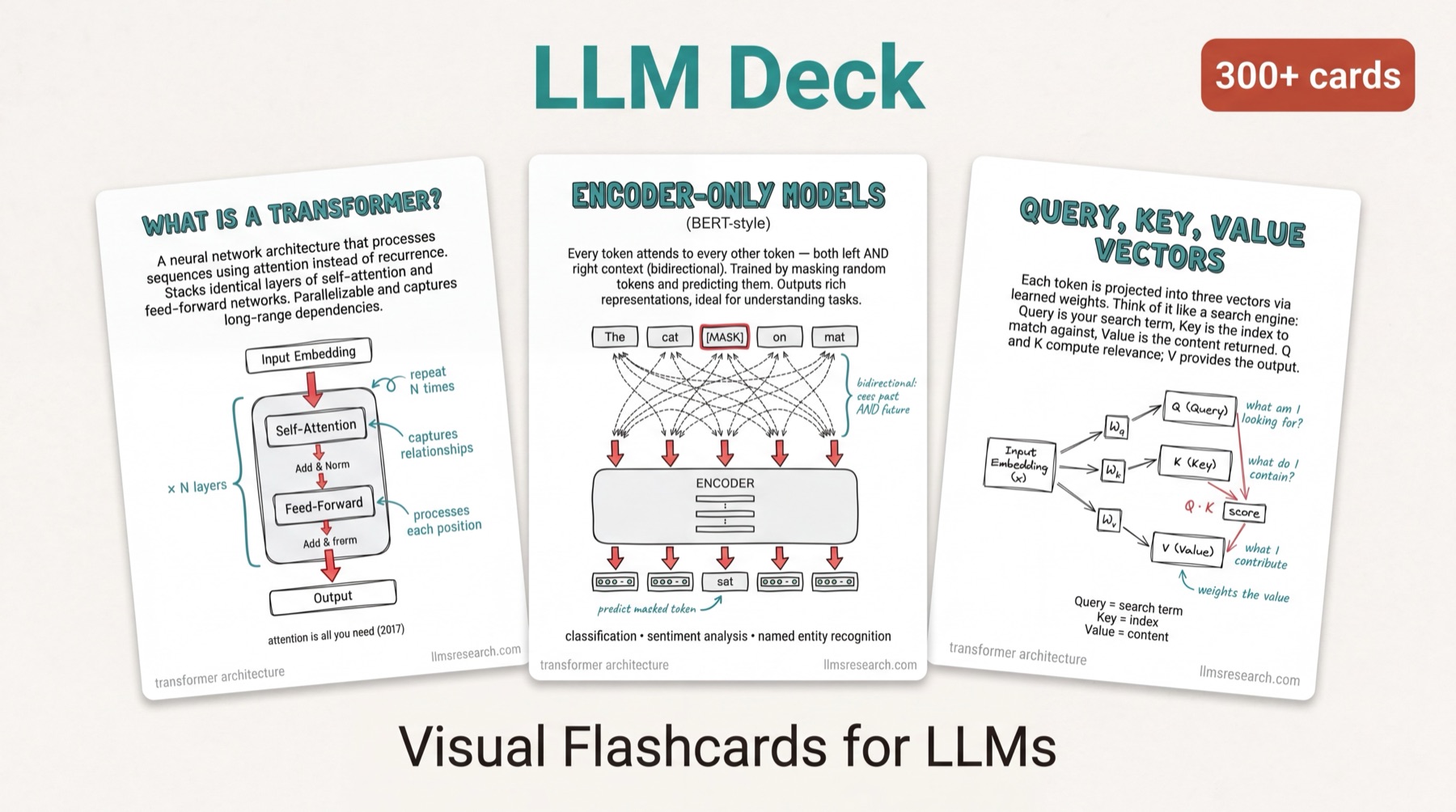

An illustrated deck for revising large language model concepts, with hand-drawn diagrams and plain-English explanations. It is designed for engineers preparing for interviews and students studying for exams.

What’s in the deck

- I. Transformer architecture. Attention, FFN, layer norm, residuals.

- II. Attention variants. MHA, GQA, MQA, sparse, sliding-window.

- III. Tokenization & embeddings. BPE, positional encoding, RoPE.

- IV. Pretraining. Objectives, scaling laws, data mixtures.

- V. Fine-tuning & alignment. SFT, RLHF, DPO.

- VI. Retrieval & tools. RAG, agents, function calling.

- VII. Inference. KV cache, quantization, speculative decoding.

- VIII. Evaluation. Benchmarks, reasoning, contamination.